Simple data scraping using online tools

Scraping is a set of techniques used to extract information from sources such as web pages, PDFs, or scanned images into a file type that can be analyzed further, for example a table format such as comma-separated values (.csv) or Microsoft Excel (.xls).
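To make "a file type that can be analyzed further" concrete, here is a minimal Python sketch of how a program reads a comma-separated table. The county names, column names, and figures are invented for illustration and do not come from any real document.

```python
import csv
import io

# Made-up sample data for illustration only; not taken from any real document.
SAMPLE_CSV = """county,year_1,year_2
County A,100,120
County B,80,95
"""

def read_table(text):
    """Parse CSV text into a list of dictionaries, one per data row."""
    return list(csv.DictReader(io.StringIO(text)))

rows = read_table(SAMPLE_CSV)
for row in rows:
    # Each value is addressable by its column name, which is what makes
    # a table format easier to analyze than a PDF page.
    print(row["county"], row["year_1"], row["year_2"])
```

Once the data is in this shape, each value can be looked up by row and column, which is not possible with text locked inside a PDF.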

Several online tools let users extract data from files by converting them to another format. Web-based tools for simple scraping of PDFs include:

The common steps for converting your PDF file on any of the three platforms are:

a) Upload your PDF file

b) Enter a valid email address. You should be able to access this email address because the converted file will be sent there.

c) Click on the convert button. The web service will momentarily process the file and on completion display a success dialogue box.

d) Open your email inbox to access your converted document.

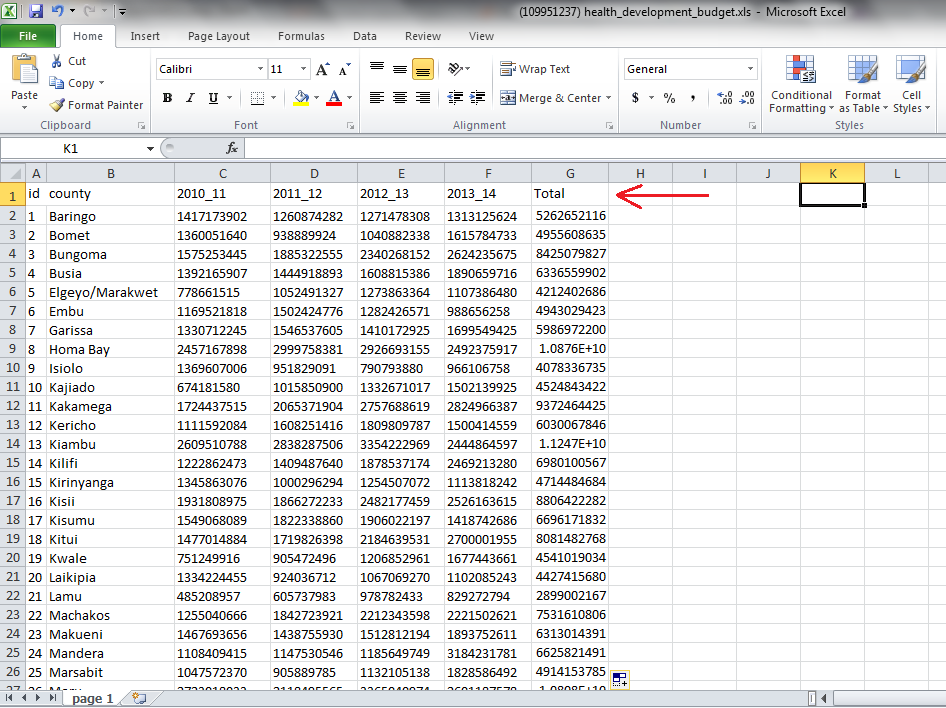

As a practical example, we will convert this document, which is uploaded on Google Drive. You must have a Google account to access the file. The data set is about projected health development budget estimates from 2011 to 2014. We want to calculate the sum of the total health development county budget from 2010 through 2014. We will convert the document into an Excel file so that we can use Excel's sum function to get the total.

1) Download the file from Google Drive.

2) From your browser navigate to http://www.pdftoexcelonline.com/

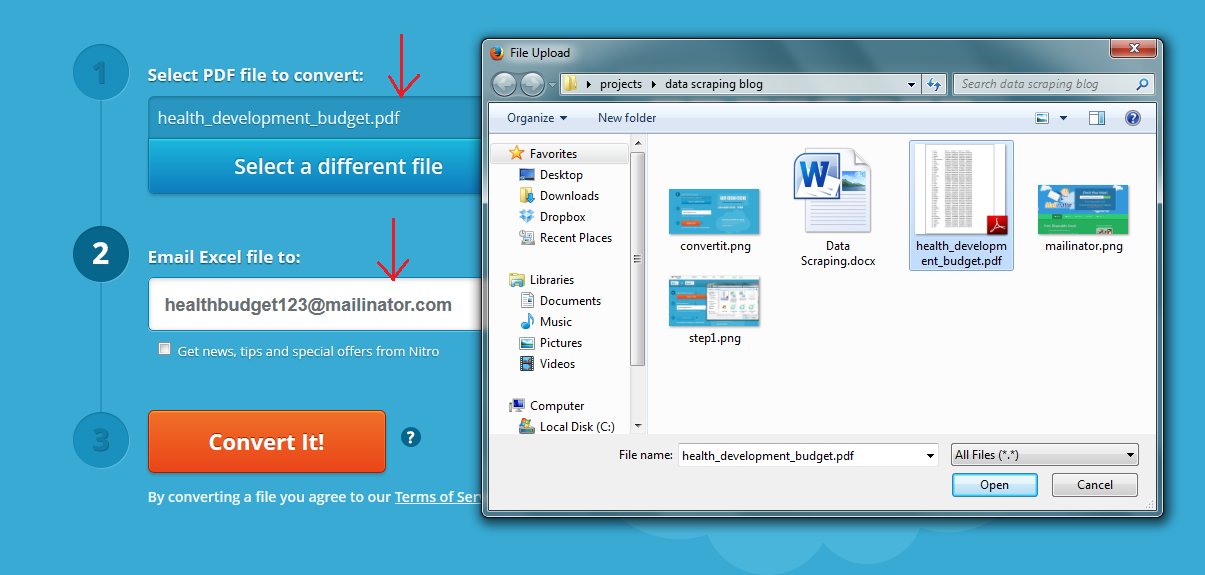

3) Click on the “Select a File” button as shown below. Browse to your saved file and select it. Click Open, then enter a valid email address to which the converted document will be sent. You can opt to use a disposable email service such as http://www.mailinator.com, which lets you receive emails without signing up. Just enter a random name, e.g. [email protected], and click on “Check it.”

Enter the same email address on pdftoexcelonline.com.

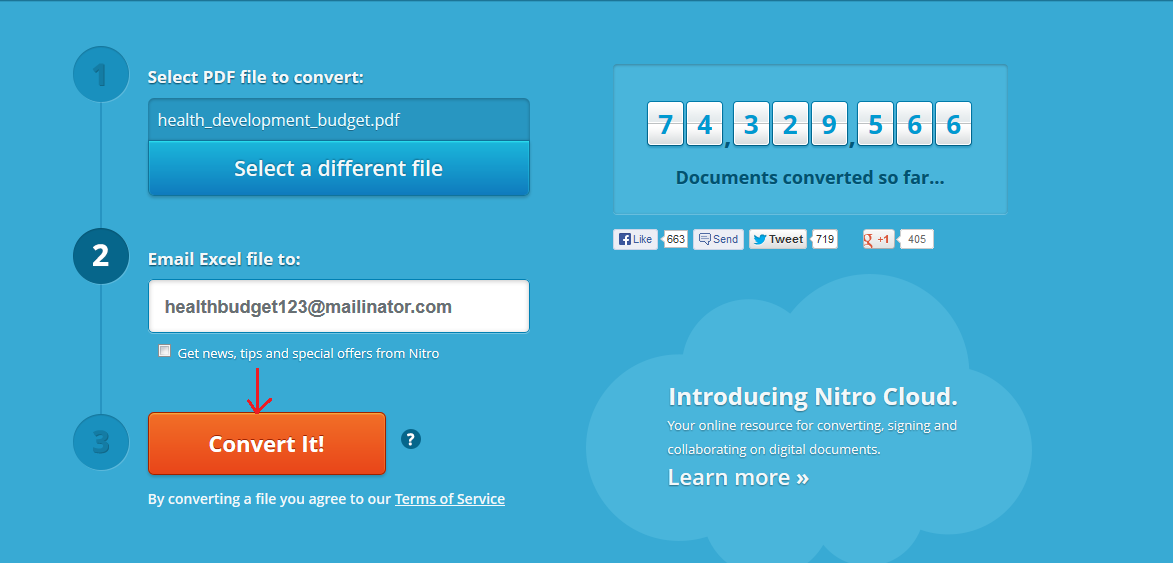

4) Click on the “Convert it!” button.

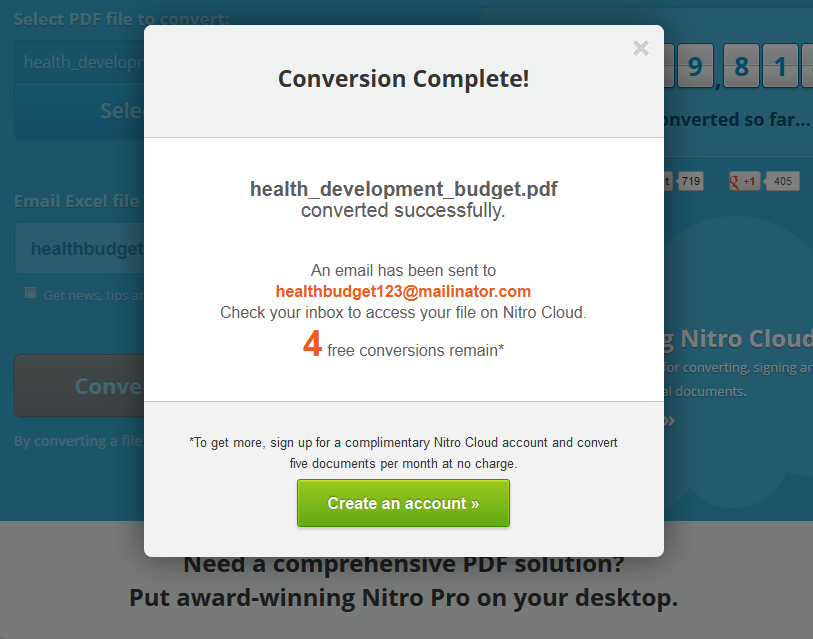

5) The browser will briefly show a dialogue box informing you that it is processing your document. On completion, you will get a screen like the one below:

6) If you check your Mailinator account, you should now have one email in your inbox. The email is from pdftoexcel and contains the converted document.

7) Download your document by clicking on the link provided.

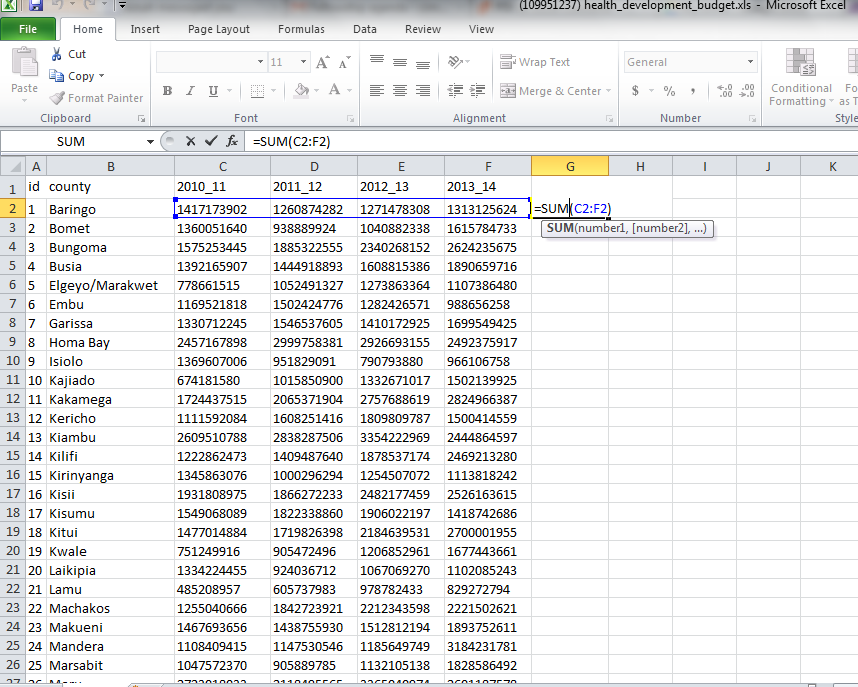

Save the file on your computer. The downloaded file is now in Excel format (.xls), so you can open it in Microsoft Excel and perform calculations on the dataset.

8) We can test our file by performing a simple sum calculation on the county budgets from 2010 to 2014. Open the file in Excel, then type Total or Sum as the header of column G. We will perform our calculation in cell G2. Click on cell G2, then enter a sum function as in the diagram below.

9) Hit Enter. You get the total county health development budget from 2010 to 2014 for that county. You can now fill the formula down to get the totals for the remaining counties.

By converting the data from PDF format to Excel format, we were able to add a computed column called Total/Sum. We would not have been able to do this in a PDF file. This is an example of data scraping.
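The same per-row total can also be computed outside Excel. The sketch below is only an illustration under stated assumptions: it assumes the converted table has been saved from Excel as CSV, that the first column holds the county name, and that every other column holds one year's budget. The function name `add_totals`, the column names, and all figures are hypothetical.

```python
import csv
import io

# Hypothetical data: county names, column names, and amounts are invented.
SAMPLE_CSV = """County,2010,2011,2012,2013,2014
County A,10,20,30,40,50
County B,5,5,5,5,5
"""

def add_totals(text, label_column="County", total_column="Total"):
    """Return the rows of a CSV table with an extra per-row total column.

    Every column except `label_column` is assumed to hold a number.
    """
    reader = csv.DictReader(io.StringIO(text))
    value_columns = [name for name in reader.fieldnames if name != label_column]
    rows = []
    for row in reader:
        # Equivalent to a row-wise SUM in Excel, applied to every row
        # (the "fill down" step).
        row[total_column] = sum(float(row[name]) for name in value_columns)
        rows.append(row)
    return rows

totals = add_totals(SAMPLE_CSV)
for row in totals:
    print(row["County"], row["Total"])
```

Looping over every row plays the role of filling the formula down column G: each county gets its own total without retyping the calculation.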